Pedestrian Detection R-CNN

Using a Faster R-CNN architecture to detect and track pedestrians in real time. A deep dive into two-stage object detection for autonomous safety and urban surveillance.

Framework: PyTorch / Torchvision

Architecture: Faster R-CNN

Base Model: ResNet-50 FPN

Libraries: OpenCV, NumPy

The Vision

In autonomous systems, perceiving human presence with high confidence is non-negotiable. This project explores the Faster R-CNN (Faster Region-based Convolutional Neural Network) workflow for pedestrian detection.

Unlike single-stage detectors such as YOLO, Faster R-CNN uses a Region Proposal Network (RPN) to split detection into two phases: first proposing candidate regions, then classifying and refining them. This two-stage design generally yields more accurate localization, especially in crowded urban environments.

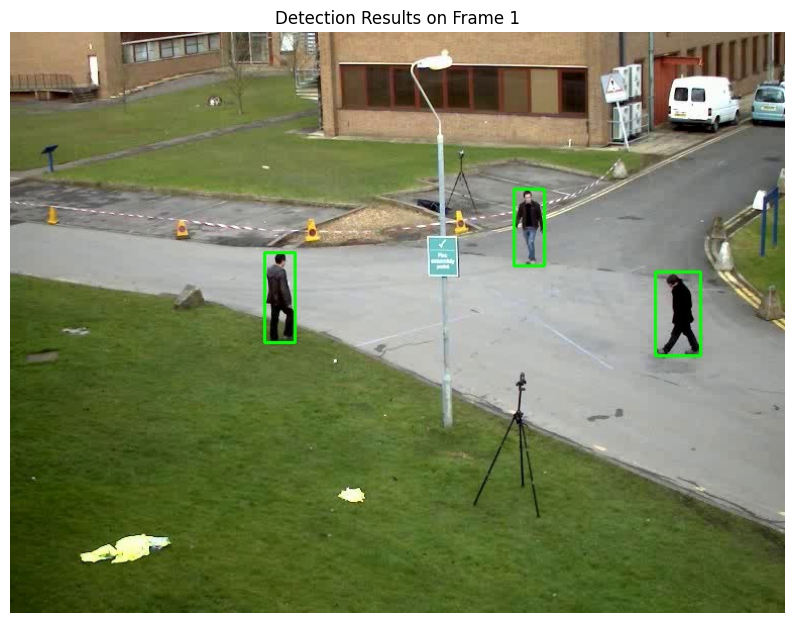

Model Output Visualization

Multi-Target Tracking

Occlusion Handling

Detection Pipeline

Region Proposal (RPN)

The RPN slides a small network over the backbone's feature maps and, at each location, scores a set of reference boxes (anchors) as potential pedestrian locations.
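To make the sliding-window idea concrete, here is a minimal sketch of anchor generation: every feature-map cell gets a fixed set of boxes at several scales and aspect ratios, which the RPN then scores. The function name `generate_anchors`, the stride, and the scale and ratio values are illustrative assumptions, and the loops are written in plain Python for readability rather than speed.

```python
def generate_anchors(feat_h, feat_w, stride,
                     scales=(32, 64, 128), ratios=(0.5, 1.0, 2.0)):
    """Return (x1, y1, x2, y2) anchors in input-image pixel coordinates."""
    anchors = []
    for row in range(feat_h):
        for col in range(feat_w):
            # Centre of this feature-map cell projected back onto the image.
            cx = (col + 0.5) * stride
            cy = (row + 0.5) * stride
            for scale in scales:
                for ratio in ratios:
                    # ratio = height / width; the area stays scale ** 2.
                    w = scale / ratio ** 0.5
                    h = scale * ratio ** 0.5
                    anchors.append((cx - w / 2, cy - h / 2,
                                    cx + w / 2, cy + h / 2))
    return anchors


# A tiny 2 x 3 feature map with stride 16 yields 2 * 3 * 9 anchors.
anchors = generate_anchors(feat_h=2, feat_w=3, stride=16)
print(len(anchors))  # 54
```

Tall anchors (ratio 2.0, meaning height is twice the width) tend to fit standing pedestrians better than square ones, which is one reason to include several aspect ratios.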

Feature Extraction

A backbone CNN (ResNet-50 with FPN in this project) extracts deep spatial features from the input frames. These feature maps feed both the RPN and the final detection head.

Classification & Refinement

The final stage classifies each proposal as 'pedestrian' or background and regresses offsets that tighten the bounding box around the person.
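The refinement step can be sketched as follows, assuming the standard Faster R-CNN box parameterisation: the head predicts centre shifts relative to the proposal's size and log-scale changes to its width and height. The function name `decode_box` and the example numbers are hypothetical. Real implementations commonly also scale the offsets by fixed weights and clip the result to the image, which this sketch leaves out.

```python
import math


def decode_box(proposal, deltas):
    """Apply predicted offsets (dx, dy, dw, dh) to an (x1, y1, x2, y2) proposal."""
    x1, y1, x2, y2 = proposal
    dx, dy, dw, dh = deltas

    # Convert the proposal to centre/size form.
    w, h = x2 - x1, y2 - y1
    cx, cy = x1 + 0.5 * w, y1 + 0.5 * h

    # Shift the centre proportionally to the proposal size,
    # and rescale width and height exponentially.
    new_cx = cx + dx * w
    new_cy = cy + dy * h
    new_w = w * math.exp(dw)
    new_h = h * math.exp(dh)

    # Convert back to corner form.
    return (new_cx - new_w / 2, new_cy - new_h / 2,
            new_cx + new_w / 2, new_cy + new_h / 2)


# A proposal that is too wide and sits slightly left of the person:
# shift right by 10% of its width and halve the width.
proposal = (100.0, 50.0, 140.0, 150.0)
refined = decode_box(proposal, (0.1, 0.0, math.log(0.5), 0.0))
print(refined)  # roughly (114, 50, 134, 150)
```

Predicting relative offsets instead of absolute coordinates keeps the regression targets on a similar scale for small and large pedestrians.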

Real-World Applications

Autonomous Safety

Critical for Automatic Emergency Braking (AEB) systems in self-driving cars.

Smart Surveillance

Automated monitoring for restricted zones and public safety analytics.